New Scientist’s headline, “Google wants to rank websites based on facts not links,” oversimplifies Google’s algorithm considerably, but you get the idea: according to the article, information veracity is not a major ranking factor today, and Google wants to change that. Speaking only as a member of the SEO industry, I think this claim is important because it represents a huge shift in ranking factors. The change clearly has implications beyond SEO, but that’s a topic for another post. Today I’m talking only about search traffic and the SEO business.

The New Scientist article opens with a tagline about quality: “[t]he trustworthiness of a web page might help it rise up Google’s rankings if the search giant starts to measure quality by facts, not just links.” Again, every SEO knows Google doesn’t measure quality by links alone, but we’ll grant New Scientist some ignorance on the algo. What interests me is how factuality might be worked into the algo. More on that later.

[blog_stripe]Let’s look at the New Scientist article and parse each point through an SEO lens. As a science publication, New Scientist naturally views the story through the lens of an institution interested in data and academic accuracy. These are all good things, but they tell only part of the story. Here’s a play-by-play of the article. It reads:

Google’s search engine currently uses the number of incoming links to a web page as a proxy for quality, determining where it appears in search results. So pages that many other sites link to are ranked higher. This system has brought us the search engine as we know it today, but the downside is that websites full of misinformation can rise up the rankings, if enough people link to them.

That understates the algo by several hundred factors, yada yada, but it’s a good point. As far as we know, Google’s algo does not currently care whether a page’s info is accurate, only whether it’s popular and provides a good experience for the reader. I think nearly every SEO-minded nerd out there understands by now that Google cares about a page’s trustworthiness and that they’re in the business of showing searchers the “right” answer to each of their queries. But now they’re allegedly changing how they determine what’s right.
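To make the links-as-a-proxy idea concrete, here is a deliberately naive Python sketch that ranks pages purely by how many incoming links they have. The page names and link graph are hypothetical, and Google’s real ranking (PageRank plus many other signals) weights links rather than simply counting them; the point is only to show how a well-linked page can outrank an accurate one.

```python
from collections import Counter

# Hypothetical link graph: (source_page, target_page) pairs.
links = [
    ("blog-a", "myth-site"),
    ("blog-b", "myth-site"),
    ("forum-c", "myth-site"),
    ("journal-d", "science-site"),
]

def rank_by_inlinks(edges):
    """Rank pages by raw incoming-link count, highest first (ties broken alphabetically)."""
    counts = Counter(target for _, target in edges)
    return sorted(counts, key=lambda page: (-counts[page], page))

print(rank_by_inlinks(links))  # ['myth-site', 'science-site']
```

Nothing in this ranking looks at what the pages actually say, which is exactly the downside New Scientist describes.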

A Google research team is adapting that model to measure the trustworthiness of a page, rather than its reputation across the web. Instead of counting incoming links, the system – which is not yet live – counts the number of incorrect facts within a page. “A source that has few false facts is considered to be trustworthy,” says the team (arxiv.org/abs/1502.03519v1). The score they compute for each page is its Knowledge-Based Trust score.

I want more information on this “Google research team.” I was able to identify the authors of the above-linked paper, Knowledge-Based Trust: Estimating the Trustworthiness of Web Sources, and most of them are Google researchers and employees. I don’t doubt the claim that they’re a “Google research team.” I just want to know more about them.

But this is where it starts to get interesting for me: “…measure the trustworthiness of a page, rather than its reputation across the web. Instead of counting incoming links…” I don’t think the trustworthiness measurement, the Knowledge-Based Trust (KBT) score, will replace reputation measurement. I think it has to work alongside reputation.
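Here is a minimal Python sketch of that idea, under heavy assumptions. It approximates a KBT-style score as the share of a page’s checkable facts that agree with a reference knowledge base, then blends that with a link-based reputation score instead of replacing it. The knowledge base, the `Page` fields, the neutral 0.5 default, and the 50/50 weighting are all hypothetical; the paper itself estimates trustworthiness with a probabilistic model that also accounts for errors in extracting facts from pages, which this simple ratio ignores.

```python
from dataclasses import dataclass

# Hypothetical reference knowledge base: (subject, predicate) -> accepted value.
KNOWLEDGE_BASE = {
    ("MMR vaccine", "causes"): "no autism link",
    ("GMO food", "safety"): "safe to eat",
}

@dataclass
class Page:
    url: str
    facts: dict        # (subject, predicate) -> value claimed on the page
    reputation: float  # link-based score, assumed normalized to 0..1

def simplified_kbt(page: Page) -> float:
    """Share of the page's checkable facts that agree with the knowledge base.

    A simplification for illustration, not the paper's probabilistic model.
    """
    checkable = [key for key in page.facts if key in KNOWLEDGE_BASE]
    if not checkable:
        return 0.5  # no evidence either way: neutral prior (an assumption)
    correct = sum(page.facts[key] == KNOWLEDGE_BASE[key] for key in checkable)
    return correct / len(checkable)

def combined_score(page: Page, trust_weight: float = 0.5) -> float:
    """Blend trust with reputation rather than replacing reputation outright."""
    return trust_weight * simplified_kbt(page) + (1 - trust_weight) * page.reputation

popular_myth = Page("myth.example", {("MMR vaccine", "causes"): "autism"}, reputation=0.9)
accurate = Page("science.example", {("MMR vaccine", "causes"): "no autism link"}, reputation=0.6)

print(combined_score(accurate) > combined_score(popular_myth))  # True
```

With equal weights, the accurate but less popular page outscores the popular one that gets the fact wrong, while reputation still counts for half of each score.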

[/blog_stripe]An example

Let’s say I google “do vaccines cause autism?” Right now, Google does a great job displaying results that report the correct answer: no, they don’t (this is not up for debate, by the way. Go home, antivaxxers. You’re stupid). Despite the many incorrect voices out there trumpeting their false claim that the MMR vaccine or some ingredient therein causes autism in children, Google sees through it and provides results repeating the truth: there is no connection between vaccines and autism. (I like the second result particularly: howdovaccinescauseautism.com.)

So in this case, the factuality of the answer is already there. Google is already delivering factual information to its users. Today, though, that factual delivery may be something of a happy accident. I’m sure they’re working on making it more than just an accident, but you get my point. Because KBT is still a research project and hasn’t been implemented, facts are, as far as the algo is concerned, just as likely to appear in SERPs as hogwash and malarkey.

[blog_stripe]A better example

What about questions with less agreement? How about climate change or “GMO facts”? Genetically modified organisms are a hot topic on the web lately. The health industry loves to rail against them and anyone who defends them. It’s a complex topic with heated passions and scary-looking science words. It’s not something the typical layperson is qualified to speak on. And that’s exactly the point.

Everybody eats, so the topic is relatable. “Genetically modified” is an alarming term. It’s vague and just sciencey enough to have an impact on laypeople. But what does it really mean? For that, you have to ask a qualified scientist. And before I veer into an argument from authority, I’ll point out that (a) I’m not a food scientist, molecular biologist, or geneticist and (b) being one of those things doesn’t automatically make someone right. So the debate rages on, mostly between people who are unqualified to speak as authorities. Entitled to opinions, sure. But can you really back up that opinion? How does a layperson educate themselves on a complex topic? Most of the time they Google it. So now Google is left with the responsibility to provide people with the right answer. And here we are again.

What’s the right answer about GMOs? Are they safe? Can I feed them to my kids? Will Vani Hari picket my house if I buy them at the store? Are the Girl Scouts killing young girls with their evil gluten? The scientific community is in overwhelming agreement that GMOs are perfectly safe, and in some cases safer than non-GMO food. But the non-GMO crowd is loud and persuasive. So Google has an SEO problem. If The Non-GMO Project can rank #1 while being flat-out wrong, what’s Google to do?

[/blog_stripe]How facts factor into SEO

Google something. The algo looks at hundreds of factors to generate your results. Now let’s add one factor to that equation: factuality. How does factuality affect the other existing factors? Well, if you’re googling something like “GMO facts,” the process would have to look something like this:

- Receive query: “Are GMOs safe?”

- Determine factual answer: “YES!”

- Locate results that reflect factual answer

- Apply remaining factors of popularity, reputation, experience, locale, etc.

- Profit.

If the question has a factual answer (i.e., it’s not just a matter of opinion), that determination has to be made before the popularity contest begins. Sure, Google can still jump through the localization and language hoops, but a fact about GMOs or the MMR vaccine or global warming is a fact in Paris, Beijing, Johannesburg, and Boston. Google would have to insert its KBT factor early in the algo and let the traffic flow to the right answer. As for which page with the right answer ranks first, well, that’s good old SEO all over again.
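The five-step process above can be sketched in a few lines of Python. This is only a model of the speculation in this post, not anything Google has described: the `FACTUAL_ANSWERS` lookup, the `Result` fields, and the single `popularity` number standing in for all the remaining ranking factors are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Result:
    url: str
    answer: str        # the answer the page gives to the query
    popularity: float  # stand-in for links, reputation, experience, locale, etc.

# Hypothetical lookup of queries that have a settled factual answer.
FACTUAL_ANSWERS = {"are gmos safe?": "yes"}

def rank(query: str, results: list[Result]) -> list[Result]:
    """Filter by factual agreement first, then run the popularity contest."""
    fact = FACTUAL_ANSWERS.get(query.strip().lower())
    if fact is not None:
        # Steps 2-3: keep only pages that reflect the factual answer.
        results = [r for r in results if r.answer == fact]
    # Step 4: apply the remaining factors (collapsed into one number here).
    return sorted(results, key=lambda r: r.popularity, reverse=True)

results = [
    Result("loud-opinion.example", "no", popularity=0.95),
    Result("science-review.example", "yes", popularity=0.70),
    Result("farm-extension.example", "yes", popularity=0.40),
]
print([r.url for r in rank("Are GMOs safe?", results)])
# ['science-review.example', 'farm-extension.example']
```

A query with no entry in the lookup skips the filter and goes straight to the popularity contest, which matches the idea that only questions with a factual answer need the KBT step first.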

[blog_stripe]Knowledge Vault

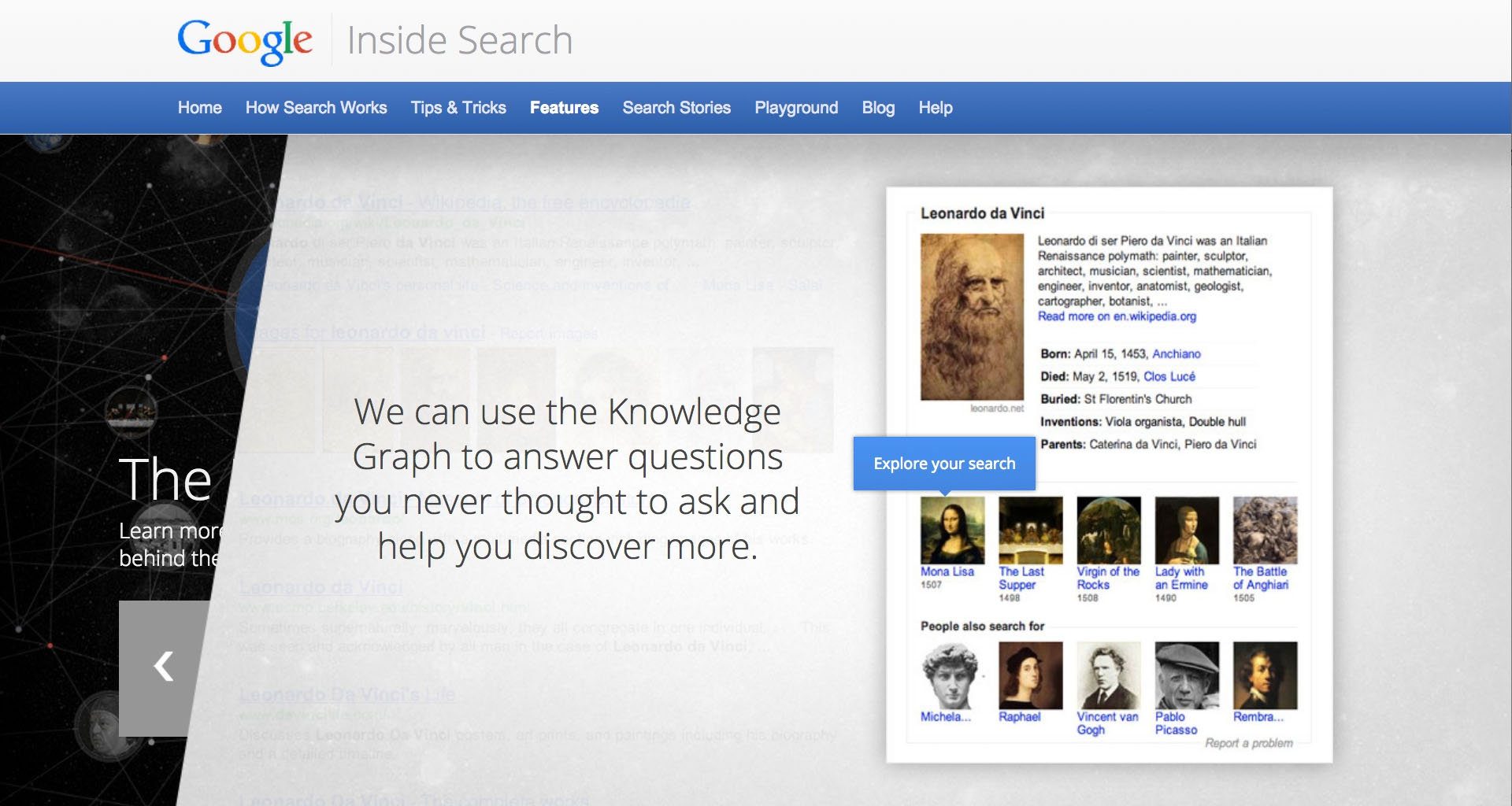

The Knowledge Vault is the secret sauce behind factuality. Google is amassing a staggering collection of facts. They already have one, the Knowledge Graph, but they’re expanding and improving on it with the vault. New Scientist wrote in August 2014 that the vault is a fully automated system that runs without human input. At the time, the vault contained 1.6 billion facts, 271 million of which were “confident facts,” meaning facts with at least a 90% chance of being correct. That’s only about 17% of the total. They are, of course, working on improving that percentage.
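The 17% figure follows directly from the two counts New Scientist reported:

$$
\frac{271 \times 10^{6}\ \text{confident facts}}{1.6 \times 10^{9}\ \text{total facts}} \approx 0.169 \approx 17\%
$$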

The SEO impact of the Knowledge Graph is already well-documented. Anytime Google can deliver information to users without passing off those users to another website, it’s good for Google (and probably users) but bad for publishers. The Knowledge Vault appears to make matters worse for publishers.

[/blog_stripe]About Google’s research

For now, the paper is available on arXiv. I had never heard of arXiv, so it was a learning experience for me. It’s a repository operated by Cornell University that hosts preprints of scholarly papers. They’re called preprints because they have not yet been published in a journal. Many go on to be published; some stay on arXiv exclusively.

I couldn’t find any announcement from Google describing the research. New Scientist seems to be driving the conversation here. There’s already some discussion happening on Reddit, but not in SEO circles. The best I could do was look up the paper’s contributors to see what their bios could tell me. I’m no computer scientist, but these folks’ résumés are seriously impressive.

The paper’s contributors:

- Xin Luna Dong

- Evgeniy Gabrilovich

- Kevin P. Murphy

- Van Dang

- Wilko Horn

- Camillo Lugaresi

- Shaohua Sun

- Wei Zhang

Further reading

- Knowledge-Based Trust: Estimating the Trustworthiness of Web Sources – arXiv:1502.03519 [PDF]

- Google wants to rank websites based on facts not links – New Scientist, Feb 28, 2015

- Google’s fact-checking bots build vast knowledge bank – New Scientist, Aug 20, 2014

- Will answers from Google’s Knowledge Vault steal your SEO content clicks? – Brafton News, Aug 20, 2014